Group Normalization

General

The group normalization primitive performs a forward or backward group normalization operation on tensors with numbers of dimensions equal to 3 or more.

Forward

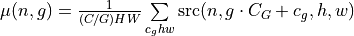

The group normalization operation is defined by the following formulas. We show formulas only for 2D spatial data which are straightforward to generalize to cases of higher and lower dimensions. Variable names follow the standard Naming Conventions.

$$\operatorname{dst}(n, g \cdot C_G + c_g, h, w) = \gamma(g \cdot C_G + c_g) \cdot \frac{\operatorname{src}(n, g \cdot C_G + c_g, h, w) - \mu(n, g)}{\sqrt{\sigma^2(n, g) + \varepsilon}} + \beta(g \cdot C_G + c_g),$$

where

- $C_G = \frac{C}{G}$,

- $c_g \in [0, C_G)$,

- $\gamma(c), \beta(c)$ are optional scale and shift for a channel (see dnnl_use_scale and dnnl_use_shift flags),

- $\mu(n, g), \sigma^2(n, g)$ are mean and variance for a group of channels in a batch (see dnnl_use_global_stats flag), and

- $\varepsilon$ is a constant to improve numerical stability.

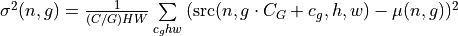

Mean and variance are computed at runtime or provided by a user. When mean and variance are computed at runtime, the following formulas are used:

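$$\mu(n, g) = \frac{1}{(C/G)HW} \sum_{c_g, h, w} \operatorname{src}(n, g \cdot C_G + c_g, h, w),$$

$$\sigma^2(n, g) = \frac{1}{(C/G)HW} \sum_{c_g, h, w} \left(\operatorname{src}(n, g \cdot C_G + c_g, h, w) - \mu(n, g)\right)^2.$$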

The $\gamma(c)$ and $\beta(c)$ tensors are considered learnable.
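To make the forward definition concrete, the following is a minimal reference sketch in plain C++ for a dense f32 tensor in NCHW order. It is not the oneDNN implementation or API: the function name `group_norm_forward_ref`, the `GroupNormStats` struct, and the convention that an empty `gamma` or `beta` vector means "flag not set" are all assumptions made for illustration. It computes the population mean and variance per (batch, group) pair and then normalizes each element.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical helper type: per-(n, g) statistics, stored row-major as N x G.
struct GroupNormStats {
    std::vector<float> mean;
    std::vector<float> variance; // population variance (divides by the count)
};

// Reference forward pass for a dense NCHW tensor. C must be divisible by G.
// An empty gamma/beta stands in for "scale/shift not used" (an assumption).
GroupNormStats group_norm_forward_ref(const std::vector<float> &src,
        std::vector<float> &dst, const std::vector<float> &gamma,
        const std::vector<float> &beta, std::size_t N, std::size_t C,
        std::size_t H, std::size_t W, std::size_t G, float eps) {
    const std::size_t CG = C / G; // channels per group
    const std::size_t HW = H * W;
    const std::size_t M = CG * HW; // elements reduced per (n, g)
    GroupNormStats stats {std::vector<float>(N * G), std::vector<float>(N * G)};
    dst.assign(src.size(), 0.0f);

    for (std::size_t n = 0; n < N; ++n) {
        for (std::size_t g = 0; g < G; ++g) {
            // In NCHW the CG channels of one group are contiguous in memory.
            const std::size_t base = (n * C + g * CG) * HW;

            double sum = 0.0;
            for (std::size_t i = 0; i < M; ++i) sum += src[base + i];
            const double mu = sum / static_cast<double>(M);

            double sq = 0.0;
            for (std::size_t i = 0; i < M; ++i) {
                const double d = src[base + i] - mu;
                sq += d * d;
            }
            const double var = sq / static_cast<double>(M);

            stats.mean[n * G + g] = static_cast<float>(mu);
            stats.variance[n * G + g] = static_cast<float>(var);

            const double inv_std = 1.0 / std::sqrt(var + eps);
            for (std::size_t cg = 0; cg < CG; ++cg) {
                const std::size_t c = g * CG + cg; // absolute channel index
                const double scale = gamma.empty() ? 1.0 : gamma[c];
                const double shift = beta.empty() ? 0.0 : beta[c];
                for (std::size_t hw = 0; hw < HW; ++hw) {
                    const std::size_t idx = base + cg * HW + hw;
                    dst[idx] = static_cast<float>(
                            scale * (src[idx] - mu) * inv_std + shift);
                }
            }
        }
    }
    return stats;
}
```

Note that scale and shift are indexed by channel while the statistics are indexed by (batch, group), which mirrors the shapes described under Data Representation.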

- The group normalization primitive computes population mean and variance and not the sample or unbiased versions that are typically used to compute running mean and variance.

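- Using the mean and variance computed by the group normalization primitive, running mean and variance $\hat\mu$ and $\hat\sigma^2$ can be computed as

$$\hat\mu := \alpha \cdot \hat\mu + (1 - \alpha) \cdot \mu,$$

$$\hat\sigma^2 := \alpha \cdot \hat\sigma^2 + (1 - \alpha) \cdot \sigma^2,$$

where $\alpha$ is a user-chosen smoothing factor.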

Difference Between Forward Training and Forward Inference

If mean and variance are computed at runtime (i.e., dnnl_use_global_stats is not set), they become outputs for the propagation kind dnnl_forward_training (because they would be required during the backward propagation) and are not exposed for the propagation kind dnnl_forward_inference.

Backward

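The backward propagation computes $\operatorname{diff\_src}(n, c, h, w)$, $\operatorname{diff\_}\gamma(c)^*$, and $\operatorname{diff\_}\beta(c)^*$ based on $\operatorname{diff\_dst}(n, c, h, w)$, $\operatorname{src}(n, c, h, w)$, $\mu(n, g)$, $\sigma^2(n, g)$, $\gamma(c)^*$, and $\beta(c)^*$.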

The tensors marked with an asterisk are used only when the primitive is configured to use $\gamma(c)$ and $\beta(c)$ (i.e., when dnnl_use_scale or dnnl_use_shift is set).
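The text does not spell out the backward formulas, so the sketch below uses the standard chain-rule result for normalization layers and should be read as an illustration rather than the library's method. It assumes a dense f32 NCHW tensor and that mean and variance were computed from `src` (that is, dnnl_use_global_stats is not set). The names `group_norm_backward_ref` and `GroupNormGrads`, and the convention that an empty `gamma` means "scale not used", are hypothetical.

```cpp
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical container for the three gradients.
struct GroupNormGrads {
    std::vector<float> diff_src;   // same shape as src
    std::vector<float> diff_gamma; // C entries; ignore if scale is unused
    std::vector<float> diff_beta;  // C entries; ignore if shift is unused
};

// Reference backward pass, assuming statistics were computed from src.
GroupNormGrads group_norm_backward_ref(const std::vector<float> &diff_dst,
        const std::vector<float> &src, const std::vector<float> &mean,
        const std::vector<float> &variance, const std::vector<float> &gamma,
        std::size_t N, std::size_t C, std::size_t H, std::size_t W,
        std::size_t G, float eps) {
    const std::size_t CG = C / G, HW = H * W, M = CG * HW;
    GroupNormGrads out {std::vector<float>(src.size(), 0.0f),
            std::vector<float>(C, 0.0f), std::vector<float>(C, 0.0f)};

    for (std::size_t n = 0; n < N; ++n) {
        for (std::size_t g = 0; g < G; ++g) {
            const std::size_t base = (n * C + g * CG) * HW;
            const double mu = mean[n * G + g];
            const double inv_std = 1.0 / std::sqrt(variance[n * G + g] + eps);

            // Pass 1: per-channel parameter gradients and group-wide sums.
            double sum_dy = 0.0, sum_dy_xhat = 0.0;
            for (std::size_t i = 0; i < M; ++i) {
                const std::size_t c = g * CG + i / HW;
                const double xhat = (src[base + i] - mu) * inv_std;
                const double scale = gamma.empty() ? 1.0 : gamma[c];
                const double dy = diff_dst[base + i] * scale;
                out.diff_gamma[c] += static_cast<float>(diff_dst[base + i] * xhat);
                out.diff_beta[c] += diff_dst[base + i];
                sum_dy += dy;
                sum_dy_xhat += dy * xhat;
            }

            // Pass 2: remove the components that flow through mean and variance.
            for (std::size_t i = 0; i < M; ++i) {
                const std::size_t c = g * CG + i / HW;
                const double xhat = (src[base + i] - mu) * inv_std;
                const double scale = gamma.empty() ? 1.0 : gamma[c];
                const double dy = diff_dst[base + i] * scale;
                out.diff_src[base + i] = static_cast<float>(
                        inv_std * (dy - sum_dy / M - xhat * sum_dy_xhat / M));
            }
        }
    }
    return out;
}
```

A useful sanity check on such a routine is that the entries of diff_src within one (batch, group) pair sum to approximately zero, since shifting every input in a group by the same constant leaves the output unchanged.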

Execution Arguments

Depending on the flags and propagation kind, the group normalization primitive requires different inputs and outputs. For clarity, a summary is shown below.

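| Flags | dnnl_forward_inference | dnnl_forward_training | dnnl_backward | dnnl_backward_data |
|---|---|---|---|---|
| dnnl_normalization_flags_none | Inputs: src. Outputs: dst | Inputs: src. Outputs: dst, $\mu$, $\sigma^2$ | Inputs: diff_dst, src, $\mu$, $\sigma^2$. Outputs: diff_src | Same as for dnnl_backward |
| dnnl_use_global_stats | Inputs: src, $\mu$, $\sigma^2$. Outputs: dst | Inputs: src, $\mu$, $\sigma^2$. Outputs: dst | Inputs: diff_dst, src, $\mu$, $\sigma^2$. Outputs: diff_src | Same as for dnnl_backward |
| dnnl_use_scale | Inputs: src, $\gamma$. Outputs: dst | Inputs: src, $\gamma$. Outputs: dst, $\mu$, $\sigma^2$ | Inputs: diff_dst, src, $\mu$, $\sigma^2$, $\gamma$. Outputs: diff_src, diff_$\gamma$ | Not supported |
| dnnl_use_shift | Inputs: src, $\beta$. Outputs: dst | Inputs: src, $\beta$. Outputs: dst, $\mu$, $\sigma^2$ | Inputs: diff_dst, src, $\mu$, $\sigma^2$, $\beta$. Outputs: diff_src, diff_$\beta$ | Not supported |
| dnnl_use_global_stats \| dnnl_use_scale \| dnnl_use_shift | Inputs: src, $\mu$, $\sigma^2$, $\gamma$, $\beta$. Outputs: dst | Inputs: src, $\mu$, $\sigma^2$, $\gamma$, $\beta$. Outputs: dst | Inputs: diff_dst, src, $\mu$, $\sigma^2$, $\gamma$, $\beta$. Outputs: diff_src, diff_$\gamma$, diff_$\beta$ | Not supported |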
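The rules behind this summary can be expressed as a small function. The sketch below is illustrative only: the `Flags` and `Prop` enums are hypothetical stand-ins with arbitrary values, not the oneDNN types, and the tensor names are plain strings. It encodes what the surrounding text states: statistics are forward outputs only for training without global statistics, they are always backward inputs, and scale and shift (with their gradients) appear only when the matching flag is set. The dnnl_backward_data case is left out.

```cpp
#include <set>
#include <string>

// Hypothetical stand-ins; the numeric values are arbitrary, not oneDNN's.
enum Flags : unsigned {
    none = 0u,
    use_global_stats = 1u,
    use_scale = 2u,
    use_shift = 4u
};
enum class Prop { forward_inference, forward_training, backward };

struct Args {
    std::set<std::string> inputs;
    std::set<std::string> outputs;
};

// Returns the tensors a caller would need to supply and receive.
Args required_args(unsigned flags, Prop prop) {
    const bool global = (flags & use_global_stats) != 0;
    const bool scale = (flags & use_scale) != 0;
    const bool shift = (flags & use_shift) != 0;

    Args a;
    if (prop == Prop::backward) {
        // Mean and variance are always inputs for backward propagation.
        a.inputs = {"diff_dst", "src", "mean", "variance"};
        a.outputs = {"diff_src"};
        if (scale) { a.inputs.insert("gamma"); a.outputs.insert("diff_gamma"); }
        if (shift) { a.inputs.insert("beta"); a.outputs.insert("diff_beta"); }
        return a;
    }

    a.inputs = {"src"};
    a.outputs = {"dst"};
    if (global) {
        // User-provided statistics are inputs.
        a.inputs.insert("mean");
        a.inputs.insert("variance");
    } else if (prop == Prop::forward_training) {
        // Computed statistics are exposed so backward can reuse them.
        a.outputs.insert("mean");
        a.outputs.insert("variance");
    }
    if (scale) a.inputs.insert("gamma");
    if (shift) a.inputs.insert("beta");
    return a;
}
```

For example, `required_args(use_scale | use_shift, Prop::forward_training)` yields src, gamma, and beta as inputs and dst, mean, and variance as outputs.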

When executed, the inputs and outputs should be mapped to an execution argument index as specified by the following table.

| Primitive Input/Output | Execution Argument Index |
|---|---|
| src | DNNL_ARG_SRC |
| $\gamma$ | DNNL_ARG_SCALE |
| $\beta$ | DNNL_ARG_SHIFT |
| mean ($\mu$) | DNNL_ARG_MEAN |
| variance ($\sigma^2$) | DNNL_ARG_VARIANCE |
| dst | DNNL_ARG_DST |
| diff_dst | DNNL_ARG_DIFF_DST |
| diff_src | DNNL_ARG_DIFF_SRC |
| diff_$\gamma$ | DNNL_ARG_DIFF_SCALE |
| diff_$\beta$ | DNNL_ARG_DIFF_SHIFT |
| binary post-op | DNNL_ARG_ATTR_MULTIPLE_POST_OP(binary_post_op_position) \| DNNL_ARG_SRC_1 |

Implementation Details

General Notes

The different flavors of the primitive are partially controlled by the flags parameter that is passed to the primitive descriptor creation function (e.g., dnnl::group_normalization_forward::primitive_desc()). Multiple flags can be set using the bitwise OR operator (|).

For forward propagation, the mean and variance might be either computed at runtime (in which case they are outputs of the primitive) or provided by a user (in which case they are inputs). In the latter case, a user must set the dnnl_use_global_stats flag. For the backward propagation, the mean and variance are always input parameters.

Both forward and backward propagation support in-place operations, meaning that src can be used as input and output for forward propagation, and diff_dst can be used as input and output for backward propagation. In case of an in-place operation, the original data will be overwritten. Note, however, that backward propagation requires the original src, hence the corresponding forward propagation should not be performed in-place.

Data Type Support

The operation supports the following combinations of data types:

| Propagation | Source / Destination | Mean / Variance / ScaleShift |
|---|---|---|
| forward / backward | f32, bf16, f16 | f32 |
| forward | s8 | f32 |

Data Representation

Mean and Variance

The mean ($\mu$) and variance ($\sigma^2$) are separate 2D tensors of size $N \times G$.

The format of the corresponding memory object must be dnnl_nc (dnnl_ab).

Scale and Shift

If dnnl_use_scale or dnnl_use_shift are used, the scale ($\gamma$) and shift ($\beta$) are separate 1D tensors of shape $C$.

The format of the corresponding memory object must be dnnl_x (dnnl_a).

Source, Destination, and Their Gradients

The group normalization primitive expects data to be an $N \times C \times SP_n \times \cdots \times SP_0$ tensor.

The group normalization primitive is optimized for the following memory formats:

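| Spatial | Logical tensor | Implementations optimized for memory formats |
|---|---|---|
| 1D | NCW | dnnl_ncw ( dnnl_abc ), dnnl_nwc ( dnnl_acb ) |
| 2D | NCHW | dnnl_nchw ( dnnl_abcd ), dnnl_nhwc ( dnnl_acdb ) |
| 3D | NCDHW | dnnl_ncdhw ( dnnl_abcde ), dnnl_ndhwc ( dnnl_acdeb ) |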
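To illustrate what the channels-first and channels-last formats mean for group normalization, the sketch below computes the physical offset of a logical element (n, c, h, w) for the 2D analogues of the formats listed for the 3D case, dnnl_nchw (abcd) and dnnl_nhwc (acdb). It assumes dense, unpadded tensors; the helper names `offset_nchw` and `offset_nhwc` are hypothetical and not oneDNN functions.

```cpp
#include <cstddef>

// Channels-first: w varies fastest, then h, then c, then n.
std::size_t offset_nchw(std::size_t n, std::size_t c, std::size_t h,
        std::size_t w, std::size_t C, std::size_t H, std::size_t W) {
    return ((n * C + c) * H + h) * W + w;
}

// Channels-last: c varies fastest, then w, then h, then n.
std::size_t offset_nhwc(std::size_t n, std::size_t c, std::size_t h,
        std::size_t w, std::size_t C, std::size_t H, std::size_t W) {
    return ((n * H + h) * W + w) * C + c;
}
```

In the channels-first layout all elements of one group of channels form a single contiguous block, whereas in the channels-last layout they are interleaved with the other groups at every spatial position, so the per-group reductions traverse memory differently in the two cases.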

Post-Ops and Attributes

Attributes enable you to modify the behavior of the group normalization primitive. The following attributes are supported by the group normalization primitive:

| Propagation | Type | Operation | Description | Restrictions |
|---|---|---|---|---|
| forward | Attribute | Scales | Scales the corresponding tensor by the given scale factor(s). | Supported only for int8 group normalization; only one scale per tensor is supported. |
| forward | Post-op | Binary | Applies a Binary operation to the result. | General binary post-op restrictions |
| forward | Post-op | Eltwise | Applies an Eltwise operation to the result. | |

Implementation Limitations

Refer to Data Types for limitations related to data types support.

Performance Tips

Mixing different formats for inputs and outputs is functionally supported but leads to highly suboptimal performance.

Use in-place operations whenever possible (see caveats in General Notes).

Examples

Group Normalization Primitive Example

This C++ API example demonstrates how to create and execute a Group Normalization primitive in forward training propagation mode.

Key optimizations included in this example:

- In-place primitive execution;

- Source memory format for an optimized primitive implementation.