A newer version of this document is available. Customers should click here to go to the newest version.

Executing Multiple Kernels on the Device at the Same Time

SYCL has two kinds of queues that a programmer can create and use to submit kernels for execution.

-

- in-order queues

-

where kernels are executed in the order they were submitted to the queue

-

- out-of-order queues

-

where kernels can be executed in an arbitrary order (subject to the dependency constraints among them).

The choice to create an in-order or out-of-order queue is made at queue construction time through the property sycl::property::queue::in_order(). By default, when no property is specified, the queue is out-of-order.

In the following example, three kernels are submitted per iteration. Each of these kernels uses only one work-group with 256 work-items. These kernels are created specifically with one group to ensure that they do not use the entire machine. This is done to illustrate the benefit of parallel kernel execution.

int multi_queue(sycl::queue &q, const IntArray &a, const IntArray &b) {

IntArray s1, s2, s3;

sycl::buffer a_buf(a);

sycl::buffer b_buf(b);

sycl::buffer sum_buf1(s1);

sycl::buffer sum_buf2(s2);

sycl::buffer sum_buf3(s3);

size_t num_groups = 1;

size_t wg_size = 256;

auto start = std::chrono::steady_clock::now();

for (int i = 0; i < iter; i++) {

q.submit([&](sycl::handler &h) {

sycl::accessor a_acc(a_buf, h, sycl::read_only);

sycl::accessor b_acc(b_buf, h, sycl::read_only);

sycl::accessor sum_acc(sum_buf1, h, sycl::write_only, sycl::no_init);

h.parallel_for(sycl::nd_range<1>(num_groups * wg_size, wg_size),

[=](sycl::nd_item<1> index) {

size_t loc_id = index.get_local_id();

sum_acc[loc_id] = 0;

for (int j = 0; j < 1000; j++)

for (size_t i = loc_id; i < array_size; i += wg_size) {

sum_acc[loc_id] += a_acc[i] + b_acc[i];

}

});

});

q.submit([&](sycl::handler &h) {

sycl::accessor a_acc(a_buf, h, sycl::read_only);

sycl::accessor b_acc(b_buf, h, sycl::read_only);

sycl::accessor sum_acc(sum_buf2, h, sycl::write_only, sycl::no_init);

h.parallel_for(sycl::nd_range<1>(num_groups * wg_size, wg_size),

[=](sycl::nd_item<1> index) {

size_t loc_id = index.get_local_id();

sum_acc[loc_id] = 0;

for (int j = 0; j < 1000; j++)

for (size_t i = loc_id; i < array_size; i += wg_size) {

sum_acc[loc_id] += a_acc[i] + b_acc[i];

}

});

});

q.submit([&](sycl::handler &h) {

sycl::accessor a_acc(a_buf, h, sycl::read_only);

sycl::accessor b_acc(b_buf, h, sycl::read_only);

sycl::accessor sum_acc(sum_buf3, h, sycl::write_only, sycl::no_init);

h.parallel_for(sycl::nd_range<1>(num_groups * wg_size, wg_size),

[=](sycl::nd_item<1> index) {

size_t loc_id = index.get_local_id();

sum_acc[loc_id] = 0;

for (int j = 0; j < 1000; j++)

for (size_t i = loc_id; i < array_size; i += wg_size) {

sum_acc[loc_id] += a_acc[i] + b_acc[i];

}

});

});

}

q.wait();

auto end = std::chrono::steady_clock::now();

std::cout << "multi_queue completed on device - took "

<< (end - start).count() << " u-secs\n";

// check results

return ((end - start).count());

} // end multi_queue

In the case where the underlying queue is in-order, these kernels cannot be executed in parallel and have to be executed sequentially even though there are adequate resources in the machine and there are no dependencies among the kernels. This can be seen from the larger total execution time for all the kernels. The creation of the queue and the kernel submission is shown below.

sycl::property_list q_prop{sycl::property::queue::in_order()};

std::cout << "In order queue: Jitting+Execution time\n";

sycl::queue q1(sycl::default_selector_v, q_prop);

multi_queue(q1, a, b);

usleep(500 * 1000);

std::cout << "In order queue: Execution time\n";

multi_queue(q1, a, b);

When the queue is out-of-order, the overall execution time is much lower, indicating that the machine is able to execute different kernels from the queue at the same time. The creation of the queue and the invocation of the kernel is shown below.

sycl::queue q2(sycl::default_selector_v); std::cout << "Out of order queue: Jitting+Execution time\n"; multi_queue(q2, a, b); usleep(500 * 1000); std::cout << "Out of order queue: Execution time\n"; multi_queue(q2, a, b);

In situations where kernels do not scale strongly and therefore cannot effectively utilize full machine compute resources, it is better to allocate only the required compute units through appropriate selection of work-group/work-item values and try to execute multiple kernels at the same time.

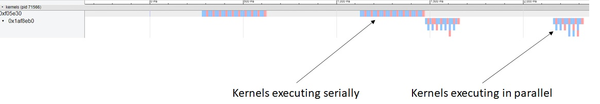

The following timeline view shows the kernels being executed by in-order and out-of-order queues (this was collected using the onetrace tool available at https://github.com/intel/pti-gpu/tree/master/tools/onetrace). Here one can clearly see that kernels submitted to the out-of-order queue are being executed in parallel. Another thing to notice is that not all three kernels are executed in parallel all the time. How many kernels are executed in parallel is affected by multiple factors such as the availability of hardware resources, the time gap between kernel submissions, etc.

It is also possible to statically partition a single device into sub-devices through the use of create_sub_devices function of device class. This provides more control to the programmer for submitting kernels to an appropriate sub-device. However, the partition of a device into sub-devices is static, so the runtime will not be able to adapt to the dynamic load of an application because it does not have flexibility to move kernels from one sub-device to another.